Jump To Section

- 1 The Continuous Capability Model

- 2 Why Is Ignoring Continuous Learning a Business Risk in 2026?

- 3 How Are AI and Automation Changing Design Capability Requirements?

- 4 How Are User Expectations Redefining Design Standards?

- 5 What Types of Learning Actually Build Enterprise Capability?

- 6 How Do Communities of Practice Prevent Fragmentation?

- 7 Designing a Learning Plan at the Organizational Level

- 8 Final Takeaways

Professional designers are continuous learners. That statement is often framed as encouragement for students, but in practice it is a strategic reality for any organization that depends on digital products and services to compete.

Professional design teams have always learned as their work evolves. What’s changed is the speed at which new tools, new interaction patterns, and new risks enter production environments, especially as AI and automation become embedded in key areas of business.

That makes capability drift, where skills and standards slowly fall behind the environment, a business problem, not a personal development issue.

If your design capability is not continuously learning, it is slowly becoming outdated. In environments where customer experience, digital platforms, and data-driven services are tightly linked to revenue and trust, that is not a minor issue. It is a business risk.

This article reframes continuous learning as operational infrastructure: a managed capability that reduces risk, improves quality, and increases execution speed without sacrificing governance.

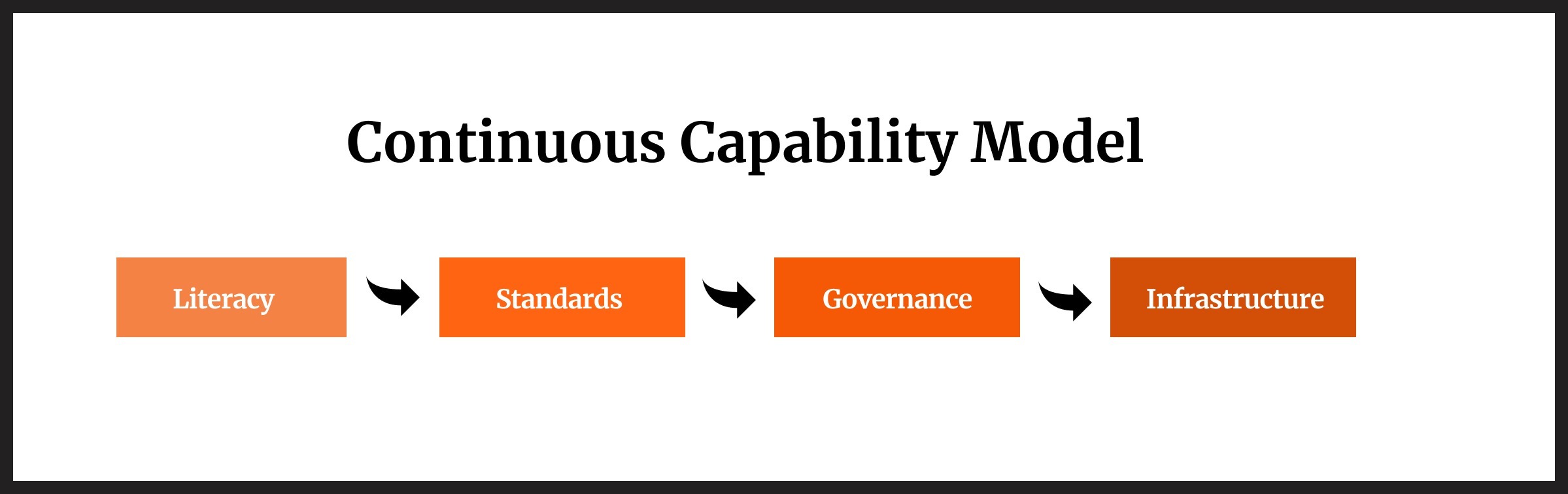

The Continuous Capability Model

To make continuous learning practical (and governable) at scale, use a simple framework:

- Literacy: baseline fluency in the tools, concepts, and risks shaping modern product work

- Standards: shared methods, patterns, and quality bars that prevent inconsistent execution

- Governance: guardrails for risk, compliance, accessibility, privacy, and responsible AI

- Infrastructure: the operating mechanisms (forums, time allocation, measurement, enablement) that make learning repeatable

You’ll see this model referenced throughout the sections below.

Why Is Ignoring Continuous Learning a Business Risk in 2026?

Continuous learning is a business concern because design decisions directly affect revenue, compliance, and customer trust in digital-first environments.

When AI, automation, and user expectations evolve faster than internal capability, teams accumulate quality gaps, inconsistency, and unmanaged tool risk. Treating learning as optional increases operational fragility.

Design principles endure: clarity, hierarchy, usability, accessibility, human-centered thinking. But the environment in which those principles must operate changes constantly: platforms, channels, device behaviors, data constraints, and governance requirements.

History shows the pattern clearly.

When graphical user interfaces expanded computing beyond specialists, designers translated complexity into navigable systems.

When the web introduced new constraints (performance, browser variability, fluid layouts), UX methods and tooling changed.

Each shift didn’t invalidate fundamentals; it changed the context and the expectations placed on teams.

The current shift is different in one important way: automation increases the speed and scale at which design decisions propagate. When content, layouts, flows, and recommendations can be generated or personalized rapidly, the blast radius of inconsistent standards and weak governance grows.

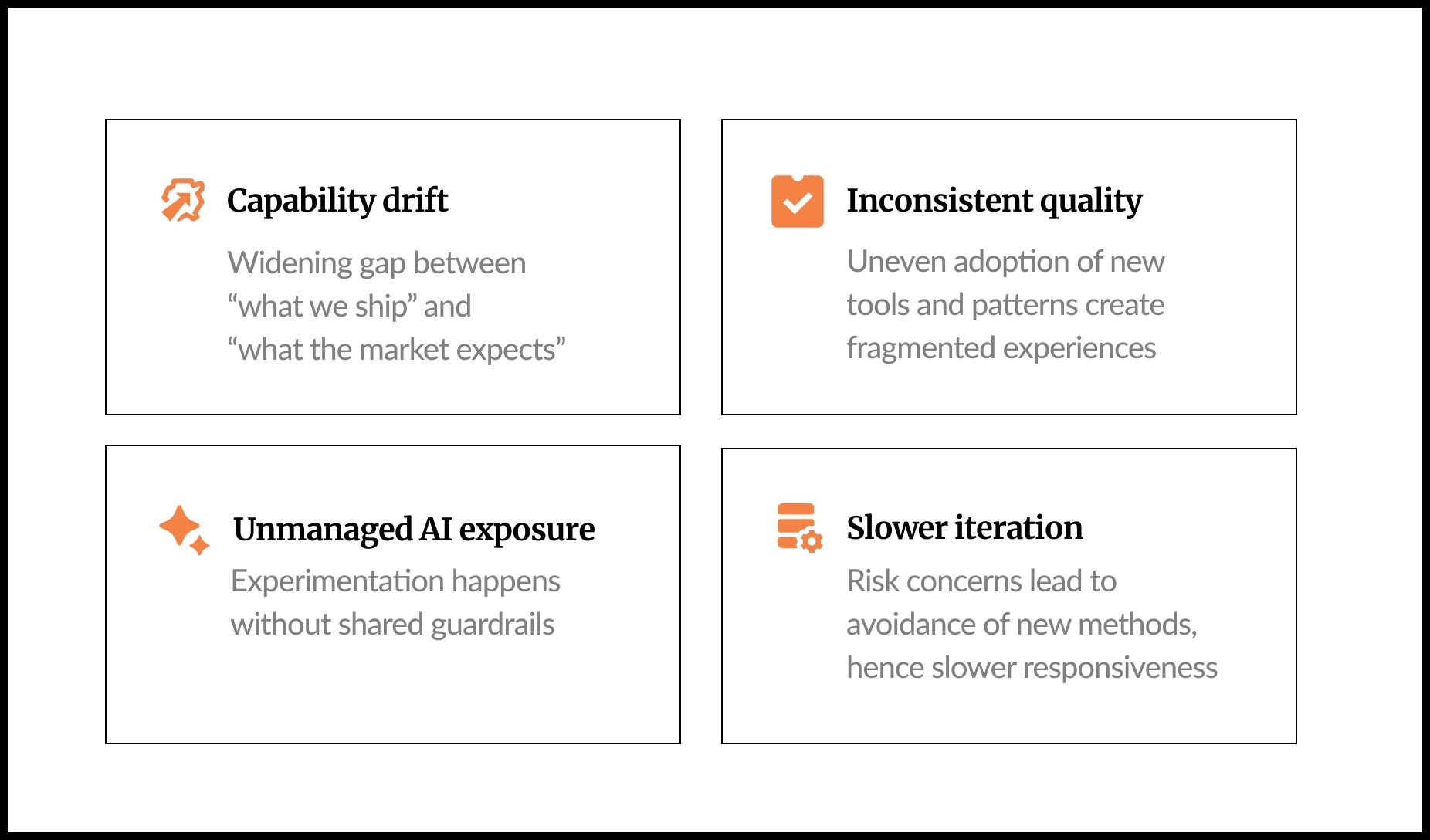

If continuous learning is unmanaged, enterprises typically see predictable failure modes:

- Capability drift: teams continue delivering, but the gap between “what we ship” and “what the market expects” widens.

- Inconsistent quality: different product areas adopt new tools and patterns unevenly, creating fragmented experiences.

- Unmanaged AI exposure: experimentation happens without shared guardrails, documentation, or review pathways.

- Slower iteration: organizations avoid new methods out of risk concerns, then lose speed and responsiveness.

How Are AI and Automation Changing Design Capability Requirements?

AI shifts design from craft execution toward system-level oversight. Designers increasingly need to evaluate bias, explainability, data governance, and repeatability, not only usability and aesthetics. Capability gaps here introduce operational, legal, and reputational risk.

AI is moving from isolated experimentation to everyday enablement: research synthesis, prototyping, content drafting, personalization, and decision support. Exactly how fast this happens varies by organization, because enterprises must contend with security reviews, procurement, privacy constraints, and regulatory considerations.

Smaller organizations often adopt new tools faster because they have fewer governance dependencies; enterprises move more deliberately because the cost of mistakes is higher.

The goal is not “faster adoption.” The goal is controlled adoption. For a clear perspective on why control matters more than speed in AI modernization, see this guide.

For design capability, this means:

- Teams must assess not just the interface, but the system producing the interface (data inputs, model behavior, failure modes).

- Teams need ways to explain why a decision was made and how outputs were generated (especially for AI-assisted artifacts).

- Without common practices, AI use becomes inconsistent: prompting, content policies, accessibility handling, and review steps vary by team.

This is where the Continuous Capability Model becomes practical:

- Literacy: AI fundamentals for non-machine-learning roles (limitations, hallucinations, bias risk, privacy basics). Define the baseline of your team’s literacy.

- Standards: what “good” looks like for AI-assisted UX writing, layouts, recommendations, and disclosures.

- Governance: when to involve legal, risk, compliance, security; how to document usage; what’s prohibited.

- Infrastructure: training pathways, review forums, and measurement.

How Are User Expectations Redefining Design Standards?

User expectations now include real-time responsiveness, cross-channel continuity, and intelligent assistance. Fragmented experiences can lead to distrust in an organization. Design standards must evolve to address continuity, trust, accessibility, and transparency across channels.

Technology changes are only half of the story. User behavior changes just as quickly, and expectations rise as soon as users experience better patterns elsewhere.

Common enterprise pressure points include:

- Cross-channel continuity: users expect a task started in one channel to resolve cleanly in another (web → mobile → support → in-person).

- Consistency and predictability: design systems are no longer “nice to have”; they reduce cognitive load and increase trust.

- Intelligent assistance: conversational patterns and AI-enabled help shift how users search, compare, and complete tasks.

- Trust and transparency: as automation increases, users look for clarity: what’s happening, why, and what choices they have.

- Accessibility as baseline: higher expectations and regulatory scrutiny make accessibility standards non-negotiable.

Fragmentation becomes a proxy for how well the organization works. When experiences feel stitched together, users infer internal silos, unreliable data, or weak accountability.

For design teams, continuous learning is how standards keep pace with:

- new interaction models (including conversational flows)

- updated accessibility guidance

- improved trust signals and disclosures

- better service blueprints that connect channels

For specific actions organizations can take in 2026 to design customer experiences that build trust and loyalty, read this article: 2026 Design Trends for Building Trust, Loyalty, and Effortless CX.

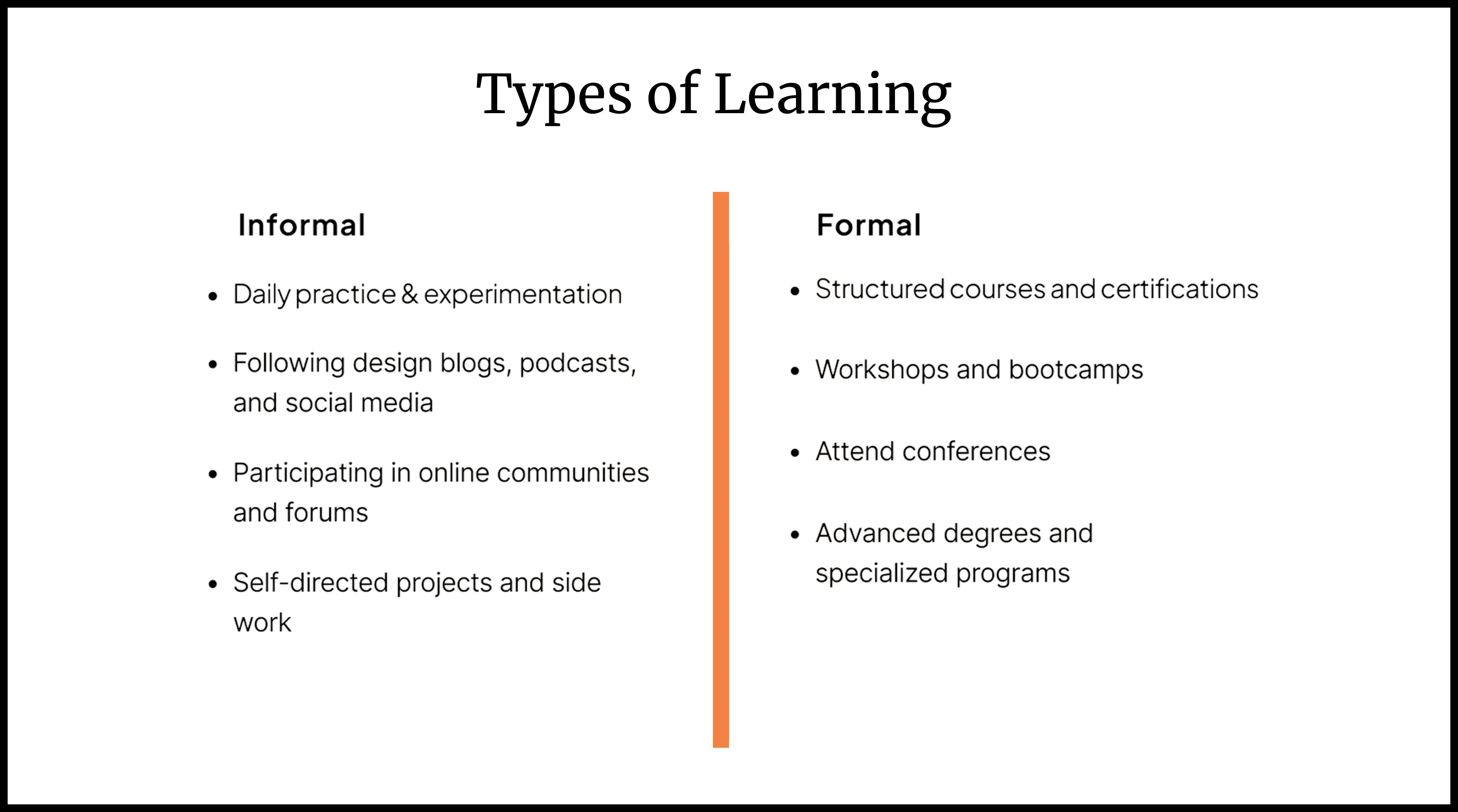

What Types of Learning Actually Build Enterprise Capability?

Enterprise capability requires both informal experimentation and formal structured education. Informal learning builds fluency and speed; formal learning creates shared language, governance alignment, and strategic depth. High-performing teams intentionally balance both rather than relying on ad hoc upskilling.

People learn differently, and enterprises need multiple pathways. But the bigger distinction is what the learning is for: individual skill growth or institutional capability.

Informal learning: fluency, speed, and practical intuition

Informal learning is where teams build confidence with new tools and patterns, especially in low-risk environments:

- internal prototypes and sandboxes

- time-boxed experiments

- reading groups and practitioner discussions

- peer demos of “what worked / what failed”

- lightweight pilots that don’t touch sensitive data

Informal learning is also where teams discover edge cases early: prompt instability, accessibility gaps in generated content, tone drift, or failure patterns that don’t show up in ideal demos.

Formal learning: shared language, alignment, and governance fit

Formal learning creates coherence across teams, which is critical in enterprises where consistency and compliance matter:

- structured courses (AI basics for product teams, responsible AI, data privacy concepts)

- workshops (service design, systems thinking, DesignOps, AI-assisted research practices)

- role-based training (leaders vs. IC designers vs. researchers vs. content)

- certification pathways where relevant (used selectively, tied to operating needs)

The leadership question to ask is: Are learning investments tied to strategic priorities?

If the roadmap includes AI-enabled experiences, modernization, or governance strengthening, learning should map directly to those outcomes rather than floating as a generic “upskilling initiative.”

How Do Communities of Practice Prevent Fragmentation?

Communities of practice turn individual experimentation into institutional standards. They provide a forum to review AI usage, document edge cases, align on governance guardrails, and share repeatable patterns. This reduces fragmented tool adoption and improves quality control.

Individual learning is necessary but insufficient. Enterprises win on collective capability: shared methods, shared standards, shared risk posture.

A community of practice (CoP) is not a project team. It is a capability forum with:

- a defined domain (e.g., AI-assisted research, design systems, content design, accessibility)

- clear intent (standardize, learn, de-risk, accelerate)

- predictable cadence

- knowledge sharing and peer critique

- documentation outputs (playbooks, guidelines, pattern updates)

In AI-enabled work, communities of practice are particularly valuable because they:

- normalize transparency about what tools are being used and where

- surface governance concerns early (privacy, IP, compliance, data handling)

- create shared prompt and workflow patterns for repeatability

- review edge cases and failure modes across products

- reduce “shadow adoption” where teams quietly use tools without alignment

Critique also changes. When AI contributes to drafts (content, layout, ideation), feedback must include:

- accuracy and hallucination risk

- bias and exclusion risk

- explainability and disclosures

- alignment with internal standards and brand

- accessibility and readability implications

Designing a Learning Plan at the Organizational Level

Leaders operationalize continuous learning by tying it to measurable capability goals, assigning ownership, allocating time, and creating accountability forums. Learning becomes real when it is scheduled, measured, documented, and governed, like any other strategic program.

Continuous learning fails when it remains abstract. Enterprises do not lack learning options; they lack operational design for learning.

A practical approach mirrors what individual designers might do, scaled appropriately:

1. Learning Audit

Start with a learning audit. Assess current skills across design, product, data, and AI. Identify gaps relative to strategic objectives. Where is literacy strong? Where is it thin? Are teams equipped to evaluate AI tools responsibly? Do leaders understand the governance implications of automation?

2. Resource Exploration

Curate a balanced mix of informal and formal pathways that align with your operating context. This may include internal workshops, curated reading lists on responsible design, targeted certifications, or the formation of new communities of practice.

3. Defined Roadmaps

Then define a roadmap. Over a defined horizon (such as six months), articulate specific learning goals, selected resources, realistic time commitments, milestones, and budget considerations. Make ownership explicit.

4. Accountability Structures

Finally, build accountability structures. Establish regular forums for knowledge sharing and critique. Encourage documentation of experiments and lessons learned. Revisit progress and adjust as needed.
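The learning audit in step 1 can be made concrete with a simple gap calculation. The following is a minimal sketch, not a prescribed method: the skill names, the 0 to 4 rating scale, the team names, and the target levels are all hypothetical and would need to be replaced with whatever your organization actually measures. It compares each team's self-assessed level against a target derived from strategic objectives and lists the largest shortfalls first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillRating:
    team: str
    skill: str
    current: int  # assumed scale: 0 (no exposure) to 4 (can teach others)


# Hypothetical target levels, assumed to come from strategic objectives.
TARGET_LEVELS = {
    "ai_literacy": 3,
    "accessibility": 3,
    "data_privacy": 2,
}


def find_gaps(ratings, targets):
    """Return (team, skill, shortfall) tuples, largest shortfall first."""
    gaps = []
    for rating in ratings:
        target = targets.get(rating.skill)
        if target is None:
            continue  # skill is not tied to a strategic objective
        shortfall = target - rating.current
        if shortfall > 0:
            gaps.append((rating.team, rating.skill, shortfall))
    # Sort by shortfall (descending), then team and skill for stable output.
    return sorted(gaps, key=lambda g: (-g[2], g[0], g[1]))


# Illustrative audit data only; not real results.
ratings = [
    SkillRating("Checkout", "ai_literacy", 1),
    SkillRating("Checkout", "accessibility", 3),
    SkillRating("Onboarding", "ai_literacy", 2),
    SkillRating("Onboarding", "data_privacy", 0),
]

for team, skill, shortfall in find_gaps(ratings, TARGET_LEVELS):
    print(f"{team}: {skill} is {shortfall} level(s) below target")
```

Ranking by shortfall is only one possible prioritization. In practice the ordering might also weight each skill by the risk it carries or by how soon the roadmap depends on it.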

The objective is not to turn every designer into a data scientist or AI engineer. It is to ensure that your organization can design responsibly and effectively in an environment shaped by automation, data, and evolving user behavior. For a deeper dive into designing transparent AI-powered experiences that not only maintain but win customer loyalty, read this article.

Final Takeaways

Continuous learning is now enterprise design infrastructure: it reduces risk, prevents fragmentation, and keeps capability aligned with AI, governance, and rising user expectations.

Leaders should operationalize learning with a framework, measurable outcomes, and accountability, not ad hoc training.

- Continuous learning is a business requirement because capability drift creates quality, trust, and governance risk in digital-first environments.

- AI raises the bar from “craft execution” to system-level oversight, which requires literacy, standards, and governance readiness.

- User expectations now demand cross-channel continuity, intelligent assistance, and trust signals; design standards must evolve accordingly.

- The Continuous Capability Model (Literacy → Standards → Governance → Infrastructure) makes learning governable at scale.

- Roadmaps work when learning is tied to strategic priorities, measured through outcomes, and reinforced through communities of practice.

Professional designers have always been continuous learners. The difference now is the speed and scale at which design decisions propagate. In an AI-enabled landscape, learning is no longer an individual virtue. It is a strategic discipline that underpins product quality, governance, and long-term resilience.